Contact Centre Reporting

A Complete Guide to Contact Centre Reporting

It is both more critical and more challenging than ever to answer the question, “What is happening in my contact centre?” That’s because today, no matter your business, your customer experience (CX) is your business: it’s your signature offering, your differentiator and your key revenue generator. And the contact centre is the home of CX. This puts contact centre performance under the metaphorical spotlight and microscope, as business leaders expect to have a clear, deep and near-real-time understanding of how contact centre performance is helping (or hurting) their business.

But as the simple call centre has evolved into the modern, multi-channel contact centre, it’s become increasingly difficult to gain a firm grasp on performance. The challenge isn’t just channel expansion. It’s multiple versions of multiple systems spread across multiple locations. It’s unstructured data that’s unusable in its raw form. It’s correlating organisation-wide data. In short, it’s the need to make sense of a trove of data that grows in size and complexity every day.

Facing this challenge, one thing is becoming clear: Most contact centres need a better, smarter reporting solution. They need reporting that breaks down silos to give full visibility across the contact centre—and across the entire organisation. They need reporting that is faster, more efficient and more accurate than manually managing endless “spreadmarts.” Finally, they need reporting that is more intuitive—with KPIs and data visualisations that anyone in the organisation can easily understand.

This guide will give you an end-to-end look at the current challenges and future potential of contact centre reporting and analytics solutions.

This Contact Centre Reporting Guide Will Cover:

- What is contact centre reporting?

- What is the difference between reporting and analytics?

- Top 10 KPIs your contact centre should be tracking

- The problem with current reporting systems—the need for better reporting

- Two paths to transforming your contact centre reporting

- Five steps to planning & implementation of a contact centre reporting solution

- Continuously improving your contact centre reporting programme

What is Contact Centre Reporting?

In the simplest sense, reporting shows you what is happening in your contact centre. Reporting takes the many streams of raw data flowing into your contact centre (from your ACD, IVR and WFM systems, for example) and transforms that data into key performance indicators (KPIs). Common KPIs include:

• First Contact Resolution (FCR)

• Adherence to Schedule

• Customer Effort Score

• Net Promoter Score

In today’s technologically advanced world, just about any reporting tool can turn raw data into KPIs. However, the art of reporting lies in two factors:

1) How the data is orchestrated into KPIs: This is the formula, so to speak, that a reporting tool uses to calculate a KPI. A better formula leads to more accurate KPIs. More accurate KPIs offer more relevant and usable information.

2) How KPIs are presented to the end user: If the point of reporting is to make raw data easy to understand, then success depends on how KPIs are visualised. End users should be able to understand KPIs at a glance—and immediately recognise when KPIs signal the need for further analysis.

The second point is even more critical today, as business leaders outside the contact centre take an increasing interest in contact centre performance. Traditionally, the users (or consumers) of contact centre reporting have been supervisors, WFM analysts and contact centre leaders. But as organisations increasingly elevate the customer experience (CX) as the core of their business, they’re recognising that the contact centre is the home of CX. Executives and other non-contact-centre leaders expect to see clear, accurate metrics of contact centre performance—and tie contact centre data with other data streams to produce customer-centric, business-wide KPIs.

What is the difference between reporting and analytics?

Reporting and analytics are increasingly discussed in tandem. In fact, they’re often used almost interchangeably. The first thing to understand is that reporting and analytics are not the same thing.

| | REPORTING | ANALYTICS |
|---|---|---|
| What it is: | Turns raw data into key performance metrics that show you what is happening in your contact centre. | Identifies patterns and trends in the data that represent relevant actionable information. |
| Example: | Reporting can tell you that call volume is up and FCR is down. | Analytics can identify a rapid increase in customers calling about an ecommerce checkout issue. |
| The bottom line: | Reporting helps you see what questions to ask. | Analytics helps you answer those questions. |

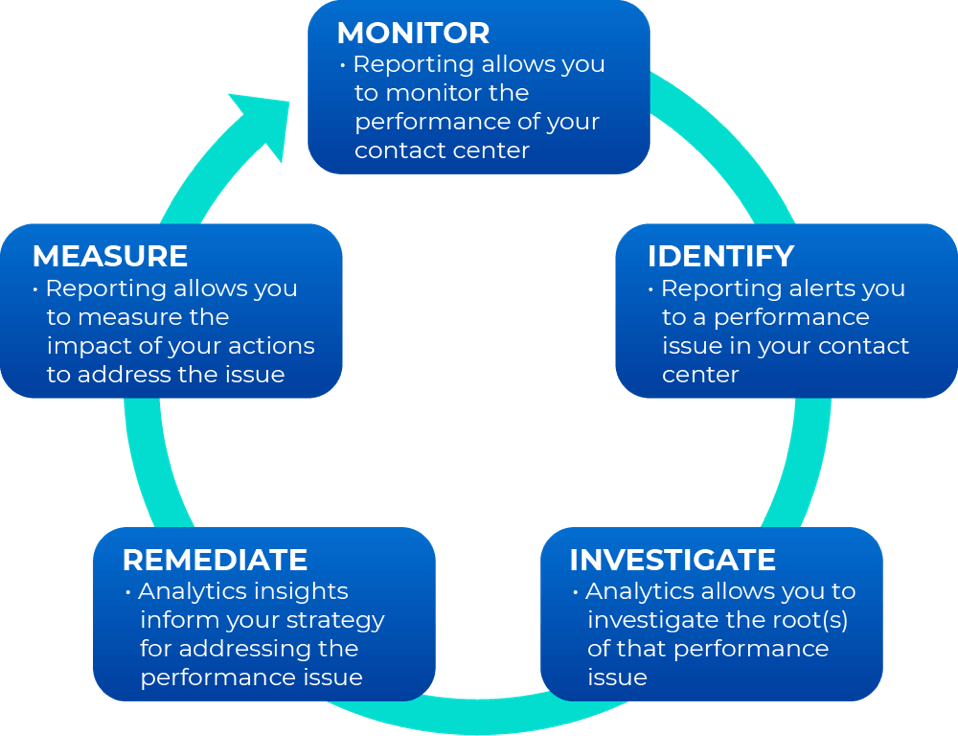

As you can see, reporting and analytics are distinct, but interrelated. In a forward-thinking contact centre, reporting and analytics feed a continuous improvement loop: reporting surfaces the questions to ask, and analytics answers them.

Legacy reporting products are product-specific and fail to deliver the omnichannel and organisation-wide visibility you need. But relying on ad hoc reporting (Excel data dumps that turn into out-of-control “spreadmarts”) is inefficient and error-prone. Modern, multi-channel contact centres need modern, integrated reporting and analytics solutions that are built to answer complex questions and deliver detailed insights.

The Top 10 Contact Centre KPIs

While every contact centre has unique reporting needs that depend on their line of business, the structure of their organisation and the markets they serve, there are several essential KPIs that just about every contact centre should be measuring:

1

First Contact Resolution (FCR): FCR is the percent of contacts that are resolved on the first interaction with the customer. For live calls or web chats, this means that the customer’s issue is resolved before they hang up the phone or end the chat session. For email, the most common standard for FCR is resolution within one business hour of receiving the original customer email. FCR is one of the most important factors in determining overall customer satisfaction.

2

Adherence to Schedule: Schedule adherence is measured by taking the total time a call centre agent is available and dividing it by the time they are scheduled to work, expressed as a percentage. This metric may take into account time spent on breaks or doing non-call related work. Most call centres define a target schedule adherence percentage allowing for cushion time beyond the scheduled break times. Adherence to schedule is, by far, the biggest factor in achieving an ROI from a WFM platform. Ensuring agents are doing what they are supposed to do, when they are supposed to do it, keeps contact centre costs down while driving other performance metrics up.

3

Customer Effort Score (CES): CES measures the customer experience with a product or service. Customers rank their experience on a seven-point scale ranging from “Very Difficult” to “Very Easy.” This determines how much effort was required to use the product or service and how likely they are to continue paying for it. In other words, CES demonstrates how easy your organisation is to do business with, which is one of the best predictors of customer satisfaction.

4

Agent Occupancy: Agent occupancy is the percentage of their available time that agents spend handling incoming calls rather than sitting idle; it is determined by dividing workload hours by staff hours. It is a statistic used in calculating the productivity of a call centre. This metric has a direct correlation to both customer and agent satisfaction. Occupancy that is too low leads to unengaged agents and lower customer satisfaction, whereas occupancy that is too high leads to agent burnout as well as low customer satisfaction.

5

Service Level: Service level measures how accessible an organisation is to their customers—defined as the percentage of calls answered within a predefined amount of time, or target time threshold. Consistent service levels are one of the first things customers notice when doing business with an organisation.

6

Forecast Accuracy: Forecast accuracy shows the percent variance between the number of customer contacts (calls, texts, emails, chats, etc.) predicted to arrive during a given period and the number of contacts that the contact centre actually receives during that time period. An accurate forecast leads to accurate schedules with the proper levels of staffing. An inaccurate forecast leads to inaccurate schedules, which lead to missed service levels and/or unnecessary staffing expenses. In essence, forecast accuracy is the first step in ensuring you have the right people in the right place at the right time in your contact centre.

7

Abandoned Call Percentage: Abandoned call percentage, also called abandon rate, is the percentage of tasks that are abandoned by the customer—either before speaking with an agent or before completing the intended task. This metric shows the level of frustration your customers have with your organisation. High abandoned call rates mean high levels of customer frustration.

8

Average Call Transfer Rate: This is a metric that monitors the percentage of calls transferred to another department, a supervisor, or a different queue. Average call transfer rate also predicts customer frustration, as customers who are transferred frequently will be more frustrated and have lower customer satisfaction.

9

Net Promoter Score (NPS) and Predictive NPS: NPS is a measure of how loyal customers are to an organisation. Customers are asked how likely they are to recommend (or promote) an organisation to others, giving a rating between 0 (not at all likely) and 10 (extremely likely). NPS provides a direct way to measure contact centre performance—and demonstrates the direct connection between the contact centre and the overall brand perception. While NPS is typically measured with a select number of random customer surveys, Predictive NPS uses artificial intelligence and machine learning to provide an NPS value for every customer and every interaction.

10

Quality Score/Predictive Quality: Quality scores show you how your agents are performing against your own internal metrics. Predictive Quality uses AI-powered analytics to automatically evaluate 100% of interactions. This metric, along with the underlying quality form data, should drive your coaching and training programmes throughout the contact centre.
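Several of the KPIs above reduce to simple ratios. The following is a minimal Python sketch of that arithmetic, not a definitive implementation: every function and parameter name is hypothetical, the forecast variance is assumed to be taken relative to actual volume (some teams divide by the forecast instead), and the NPS calculation uses the widely used convention that promoters rate 9 to 10 and detractors rate 0 to 6.

```python
def pct(numerator: float, denominator: float) -> float:
    """Return numerator / denominator as a percentage; 0.0 when the denominator is 0."""
    return 100.0 * numerator / denominator if denominator else 0.0


def first_contact_resolution(resolved_on_first_contact: int, total_contacts: int) -> float:
    # Share of contacts resolved on the first interaction.
    return pct(resolved_on_first_contact, total_contacts)


def schedule_adherence(available_minutes: float, scheduled_minutes: float) -> float:
    # Time the agent was available divided by the time they were scheduled to work.
    return pct(available_minutes, scheduled_minutes)


def occupancy(workload_hours: float, staff_hours: float) -> float:
    # Workload hours divided by staff hours.
    return pct(workload_hours, staff_hours)


def forecast_variance(forecast_contacts: int, actual_contacts: int) -> float:
    # Assumption: signed variance relative to actual volume. Agree on one
    # convention before comparing figures across teams.
    return pct(forecast_contacts - actual_contacts, actual_contacts)


def abandon_rate(abandoned_contacts: int, offered_contacts: int) -> float:
    # Share of offered contacts that the customer abandoned.
    return pct(abandoned_contacts, offered_contacts)


def transfer_rate(transferred_calls: int, handled_calls: int) -> float:
    # Share of handled calls that were transferred elsewhere.
    return pct(transferred_calls, handled_calls)


def net_promoter_score(ratings: list[int]) -> float:
    # Percentage of promoters (9-10) minus percentage of detractors (0-6).
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return pct(promoters, len(ratings)) - pct(detractors, len(ratings))


# Illustrative figures only; none of these numbers come from a real contact centre.
print("FCR %:", first_contact_resolution(720, 900))
print("Adherence %:", schedule_adherence(432, 480))
print("Occupancy %:", occupancy(34, 40))
print("Abandon rate %:", abandon_rate(45, 900))
print("NPS:", net_promoter_score([10, 9, 9, 8, 7, 6, 3, 10, 0, 9]))
```

Keeping each formula in one named function is one way to make sure the same calculation is applied everywhere a KPI is reported.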

To learn more about these critical KPIs, including best practices for calculating them and the common mistakes and misconceptions that lead contact centres off track, download Calabrio’s quick-read eBook: A Guide to Measuring—and Using—the Top 10 Contact Centre KPIs

The need for better multichannel reporting: Why contact centres should change

The first call centres emerged in the 1960s. These early call centres used simple reporting to understand performance—a balance of quality and efficiency—by answering basic questions, like:

- How many calls are we handling every hour—and every day?

- How long are these calls?

- How many customers are calling back for the same issue?

- How many calls result in additional sales?

These questions are the roots of key performance indicators (KPIs) that are still essential to contact centres today.

But a lot has changed in the last 60 years. The simple call centre has evolved into the multi-channel contact centre. Modern contact centres record much more than calls, capturing data on every aspect of contact centre operations. That data is increasingly connected with other critical data streams across the organisation. This reality requires the use of multiple systems and applications, including:

- Automatic Call Distributor (ACD): a telephony software system that answers incoming calls and routes them to a specific agent or department within a company.

- Interactive Voice Response (IVR): an automated telephony system technology that interacts with inbound callers, gathers the required information and routes the calls to the appropriate recipient.

- Workforce Management (WFM): a software system that helps a contact centre manage staff scheduling to maximise performance metrics including service quality and operational efficiency.

- Customer Relationship Management (CRM): a system that helps an organisation manage and improve relationships and interactions with customers and potential customers.

- Enterprise Resource Planning (ERP): software that allows an organisation to integrate and automate business processes and business process management of various functions related to technology, services and human resources.

The basic goal remains the same—contact centre performance still boils down to balancing quality and efficiency. But the factors that drive performance have grown exponentially more complex. Moreover, the modern contact centre already has all the data it needs to understand performance. The challenge today is how to transform that raw data into meaningful metrics—and how to do it accurately, efficiently and as close to real-time as possible.

The problem with current reporting systems

As organisations refocus on the customer experience, the contact centre becomes a hub of business intelligence. Reporting questions come in constantly—from within the contact centre and across the business—and those questions continue to grow more complicated. In the face of these increasing demands, the shortcomings of current reporting systems become glaring flaws:

1

Out-of-the-box reports don’t meet requirements

Typically, most contact centres begin with the standard reports included in their ACD, IVR and WFM systems. However, these out-of-the-box reports usually only cover the basics and likely don’t reflect a given organisation’s unique measurement needs based on industries and markets served, customer needs or geographical/cultural differences.

2

The data is siloed

When it comes to reporting and analytics, each of the systems and applications a contact centre uses (ACD, IVR, WFM, etc.) creates islands of data. Each has a dedicated database optimised for doing one siloed job and generating one set of insights. To further complicate things, many contact centres are managing multiple locations, and each may have a distinct brand or version of ACD, for example, creating yet more silos.

3

Siloed data requires manual extraction

An ACD’s standard reports are designed to show how the contact centre is performing only from the ACD’s point of view. They don’t take into account, for example, how agent scheduling (managed in a WFM system) will affect ACD metrics. In other words, siloed information makes it difficult to answer simple questions, such as, “Are break schedules negatively impacting service levels?” To resolve the situation, most contact centres turn to the trusty old spreadsheet. It’s easy to see why; spreadsheet software (usually Microsoft Excel) is the main tool most managers use to crunch numbers or present data. It’s the tool they know. If contact centre managers are lucky, the application or system they’re using kicks out data in a .CSV format, so it can be uploaded into a spreadsheet. If not, it requires manual entry or a laborious cut-and-paste process. This is all to create one single, siloed report. To determine how agent scheduling is impacting service levels, for example, at least two reports must be generated, and the data from each laboriously merged by hand in a spreadsheet, a time-intensive process that is also fraught with opportunities for error.
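To illustrate what automating that merge could look like, here is a minimal Python sketch that joins an ACD export and a WFM export on a shared time interval. The CSV layouts, column names and figures are all hypothetical; real exports will differ by vendor and configuration.

```python
import csv
import io

# Hypothetical ACD export: one row per 30-minute interval.
ACD_CSV = """interval,calls_offered,calls_answered_in_threshold
09:00,120,102
09:30,130,104
10:00,125,75
10:30,120,96
"""

# Hypothetical WFM export covering the same intervals.
WFM_CSV = """interval,agents_scheduled,agents_on_break
09:00,20,1
09:30,20,2
10:00,20,8
10:30,20,2
"""


def read_by_interval(csv_text: str) -> dict[str, dict[str, str]]:
    """Index the rows of a CSV export by their 'interval' column."""
    return {row["interval"]: row for row in csv.DictReader(io.StringIO(csv_text))}


def merge_reports(acd_text: str, wfm_text: str) -> list[dict]:
    """Join both exports on interval and derive service level and break share."""
    acd = read_by_interval(acd_text)
    wfm = read_by_interval(wfm_text)
    merged = []
    # Only intervals present in both systems can be compared.
    for interval in sorted(acd.keys() & wfm.keys()):
        a, w = acd[interval], wfm[interval]
        merged.append({
            "interval": interval,
            "service_level_pct": round(
                100 * int(a["calls_answered_in_threshold"]) / int(a["calls_offered"]), 1),
            "on_break_pct": round(
                100 * int(w["agents_on_break"]) / int(w["agents_scheduled"]), 1),
        })
    return merged


for row in merge_reports(ACD_CSV, WFM_CSV):
    print(row)
```

With the two measures side by side per interval, a question such as “Are break schedules negatively impacting service levels?” becomes a matter of reading one table rather than reconciling two reports.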

4

Reporting expands into messy “spreadmarts”

Business intelligence isn’t a static concept. Leaders don’t want a one-time answer to their questions; they want an ongoing, dynamic look at key CX metrics. So reporting needs that were once handled by a manual, ad hoc report turn into daily reports. Then, weekly, monthly and quarterly reports are tacked on. To save time, the manager just rolls daily reports into a weekly report, weekly reports into monthly reports, and so on. Pretty soon, quarterly contact centre metrics are being referenced in annual organisation-wide performance reports. Spreadsheets built on spreadsheets that are ultimately built on spreadsheets—each of which has to be manually updated.

Requests pile up, one-off reports turn into daily tasks, the cross-referencing gets more convoluted, and before they know it, contact centre managers find themselves overwhelmed by the “spreadmart”—a mind-numbing and costly proliferation of spreadsheets attempting to do the job of a dedicated data mart or data warehouse. In addition to being tedious to produce, the spreadmart does not provide the predictive and prescriptive analytics that every organisation craves today. Contact centre leaders end up spending the majority of their time updating spreadsheets and manually creating metrics—instead of focusing on how to use information and insights contained in those reports and metrics.

5

Data quality and integrity suffer

As errors proliferate in these patchwork-quilt spreadmarts, contact centres begin to lose trust in their stats. Data integrity can also erode as underlying business rules change, or as call flow/call handling protocols are reconfigured, resulting in changes to how calls are pegged in the ACD. A common result is “dueling spreadsheets”—reports ostensibly covering the same metrics, but with different data values suggesting different conclusions.

6

Changes to operational systems disrupt everything

A replacement, upgrade or reconfiguration of any component of the contact centre environment might change the way underlying operational data is logged, with ripple effects on all dependent reports. Adding new technologies (for social media tracking, for example) introduces new silos generating new data that must be incorporated into performance reports.

7

Disconnects arise between strategic goals and contact centre operations

Changes in management or organisational direction will likely necessitate significant and sometimes immediate changes in the contact centre, and thus its reporting requirements. Evolving goals within the contact centre itself also shift reporting requirements. For example, as workforce engagement emerges as a top strategic goal in many contact centres, new metrics and reports are needed to quantify agent engagement. If these changes aren’t made quickly, fundamental disconnects can create constant, unproductive internal struggles between the contact centre and upper management. These clashes can occur over anything from a perceived lack of progress to claims of conflicting goals and inconsistent direction.

Transforming Your Contact Centre Reporting System

When faced with one or more of these challenges, contact centre leaders often struggle with what to do first in improving contact centre reporting and analytics. Fortunately, this is not uncharted territory: there are established best practices that define two clear paths to transforming your reporting system. The best option depends on the specifics of your contact centre, but both options are a dramatic improvement on the status quo.

Option one: Start with what you know

Reporting frustrations can make a fresh start—building a new reporting system from scratch—an appealing option. But that’s not necessarily the right strategy for every organisation. Sometimes key stakeholders are reluctant to begin a process that could eventually lead to sweeping changes down the line. There are several common sources of resistance:

- IT personnel or analysts: Those individuals who spent days, weeks or even months creating and maintaining complex, macro-heavy spreadsheet reports may have a hard time turning their backs on the sunk cost of these reports (the time and wages spent making them). They often argue that the incremental cost of making piecemeal adjustments to these reports will be less than a wholesale replacement. While this may be true in the (very) short run, it ignores the significant potential savings of automating current labour-intensive reporting practices.

- Contact centre managers and supervisors: Already feeling overwhelmed by the technology they manage and under constant pressure to meet daily, weekly and monthly performance goals, these managers are understandably reluctant to focus on initiatives that may require them to evaluate and implement new technologies—even if these promise to make their lives easier in the long run.

- Executives: Many organisations see contact centres (particularly customer service ones) as a necessary cost of doing business, but a cost nevertheless. Costs must be minimised, and senior managers may fear that adding new technologies will squeeze already-tight budgets.

Understandable as all these viewpoints may be, the fact remains that as time goes on and the contact centre expands, piecemeal manual reporting becomes more costly and error-prone. The implicit cost of getting good data will ultimately break budgets, and bad data will confound efforts to improve contact centre performance.

The good news for all these stakeholders is that you can leverage your existing reporting system to build a new, better, smarter system. In this approach, your current spreadsheet-based reports are re-purposed—not tossed away. Despite their flaws, spreadsheet reports have the virtue of being a very accurate reflection of your contact centre’s current reporting needs. As such, they make excellent blueprints to guide the next evolutionary step in the process—eliminating manual effort through automation of data collection and consolidation.

Advantages of the “Start Small” Approach

Under this gradual, “start small” approach, the only major decision is choosing the proper enabling technologies to completely eliminate the need for a human to cut and paste data into these reports. In fact, limiting the scope of an initial project to this simple goal alone has two advantages:

1. It’s clearly understood by all stakeholders

2. The resulting benefit in saved time and money is easily quantifiable

With the right technology, these quick wins can be achieved easily while providing the foundation for even bigger gains from a more comprehensive review.

Another benefit of building on your existing foundation is giving analysts and managers breathing space to re-examine and refine metrics and calculations used in existing reports, evaluate whether these need to be adjusted, and to fine-tune report formatting. With new technology eliminating human error from the data consolidation process, managers can even take a retrospective look at previous reporting periods with fresh, accurate data to revise any assumptions or conclusions that may have been made based on erroneous information.

How to Audit Contact Centre Reports

A comprehensive review also provides the opportunity to test existing reports’ relevance to the contact centre and its stakeholders. Some reports may have outlived their usefulness. For each existing report, ask some value-testing questions:

- To whom does this report matter? Do those stakeholders agree it matters? Do they understand it?

- What actions do we take based on this report? How does it help meet our goals? What would happen if we didn’t get it?

- Do we need to manually match this report with another report to answer the questions we’re asking?

- Is the information in the report formatted in a way that promotes understanding and action, or is it just showing some numbers? Would graphs or charts help?

- Are we getting this report often enough? Too often?

- Are we seeing cumulative data (e.g., quarterly, calendar/fiscal YTD, etc.) expressed in terms that are useful to our particular needs?

- Do I have to run this same report multiple times for different contact centre sites?

- How are we getting this report (email, web, a photocopy left on office chairs every night)? Is there a better distribution method?

- What other ways can this report be improved?

These questions can be asked not only of existing reports, but also for the measurements they contain: Metrics, formulas, custom calculations and even system calculations should be validated in this way to make sure all stakeholders agree on their ongoing value and accuracy. Though the verbal definition of service level—x percent of calls answered in y seconds—will be familiar to all concerned, its underlying formula has a half-dozen or more known variations. While it’s not necessary that every stakeholder understand how it’s calculated, it is important that a single formula be applied universally.
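To show why a single formula matters, here is a minimal Python sketch comparing three service level variations on the same interval. The three variants are illustrative examples of how definitions commonly differ, not an exhaustive or authoritative list, and the field names and counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class IntervalCounts:
    offered: int                 # all calls that entered the queue
    answered_in_threshold: int   # calls answered within y seconds
    answered_total: int          # all calls answered, however long they waited
    abandoned_in_threshold: int  # callers who hung up before y seconds elapsed


def sl_over_offered(c: IntervalCounts) -> float:
    # Variant A: answered within threshold as a share of all offered calls.
    return 100 * c.answered_in_threshold / c.offered


def sl_over_answered(c: IntervalCounts) -> float:
    # Variant B: abandoned calls are ignored entirely.
    return 100 * c.answered_in_threshold / c.answered_total


def sl_excluding_short_abandons(c: IntervalCounts) -> float:
    # Variant C: calls abandoned before the threshold are removed from the base.
    return 100 * c.answered_in_threshold / (c.offered - c.abandoned_in_threshold)


counts = IntervalCounts(
    offered=1000,
    answered_in_threshold=720,
    answered_total=900,
    abandoned_in_threshold=40,
)

for name, formula in [
    ("A: over offered", sl_over_offered),
    ("B: over answered", sl_over_answered),
    ("C: excluding short abandons", sl_excluding_short_abandons),
]:
    print(f"{name}: {formula(counts):.1f}%")
```

The same interval yields three different service levels depending on the variant chosen, which is how two reports covering the same metric can end up contradicting each other.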

Even if you’re an outside-the-box thinker stuck in a slow-and-steady-wins-the-race organisation, rest assured that this conservative approach can lead to strong long-term results. With smart technologies powering their current reports, analysts and managers will inevitably discover the richness and variety of new information available in the contact centre’s underlying operational systems. Reluctant stakeholders will realise that all the tools they need for broader and deeper reporting and analysis are already at hand; all that’s needed is the determination to take the next step: a formal review.

Option two: Start by setting an end goal

Some contact centres don’t have the luxury of time required to take the first, more conservative approach. Often, the impetus for the contact centre change is a much more time-sensitive issue, such as:

- A key operational system is added, replaced or upgraded

- A second agent site is added

- A corporate merger leads to a sudden and urgent integration of two completely different call centre architectures

- Senior management launches an initiative to increase productivity or cut costs

Whatever the reason, managing a more time-sensitive reporting transformation requires an approach focused on a defined end goal. This approach requires more upfront planning and a structured, top-down process centred on key goals that drive every subsequent leg in the journey.

Five Steps for Planning Contact Centre Reporting

Whether you’ve arrived at a comprehensive review as part of your time-sensitive, strategic initial transformation, or as the next phase following a more conservative, build-on-what-we-have approach, the process is the same. In general, there are five phases in a complete review of contact centre reporting and analytics. First, the scope of the project itself must be clearly established. Second, requirements must be gathered and defined. Third comes the design process, which will likely have several iterations. Fourth comes the crucial technology selection, followed by the fifth and final phase: implementation and review.

Step 1: Determine the project scope

To stay on track, any formal project needs a defined scope, big or small. A project can be defined as narrowly as “merge average-speed-of-answer stats from these three different ACD systems into a line-graph report,” or as broadly as “build agent, team and company-wide scorecards on seven key performance indicators, to be refreshed hourly in an at-a-glance dashboard.” Other aspects to define in the project scope include:

- Timeline: Identify a timeline for completion. Open-ended projects tend to eventually sputter to a standstill without the urgency and structure of deadlines.

- Key stakeholders: Agents, supervisors, IT staff, executives and, in some cases, customers or partners need to be identified at this stage as well.

- Budget: Financial resources are often allocated at this point—or this may come later, when enabling technologies are evaluated.
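Even the narrow scope example above, merging average-speed-of-answer (ASA) stats from three ACD systems, hides a calculation decision worth settling during scoping. The following minimal Python sketch, with hypothetical names and figures, shows why a combined ASA should be weighted by the number of calls each system answered rather than taken as a plain average of the three ASA values.

```python
def combined_asa(per_system: list[tuple[int, float]]) -> float:
    """Combine (calls_answered, asa_seconds) pairs into one call-weighted ASA."""
    total_calls = sum(calls for calls, _ in per_system)
    total_wait_seconds = sum(calls * asa for calls, asa in per_system)
    return total_wait_seconds / total_calls if total_calls else 0.0


def naive_asa(per_system: list[tuple[int, float]]) -> float:
    """Plain average of the per-system ASA values, ignoring call volume."""
    return sum(asa for _, asa in per_system) / len(per_system) if per_system else 0.0


# Hypothetical figures: one large site and two much smaller ones.
systems = [(1000, 20.0), (200, 60.0), (50, 90.0)]

print(f"Call-weighted ASA: {combined_asa(systems):.1f} s")
print(f"Naive average ASA: {naive_asa(systems):.1f} s")
```

Because most calls in this example were answered quickly on the largest system, the weighted figure is far lower than the naive average, which overstates how long a typical caller waited.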

Pick your team captains

There are three critical roles that should be clearly assigned at the outset of the project:

- Project champion: To be successful, such a project should be driven by a data-savvy champion—someone who knows the value of data integrity and believes that technology can be applied successfully to the challenge of automated reporting and analytics in a complex contact centre environment. Often, this champion is a senior executive in operations or IT who can make the business case, secure the funding and appoint the right people to manage and execute the project. Note that the role of champion is different from the role of a hands-on project manager. If the champion is the driver—keeping the foot on the gas and the car on the road (and upright!)—then the project manager is the navigator that ultimately keeps the project in scope, on deadline and on track.

- Project manager: A project manager tracks tasks and deliverables along a fixed timeline and keeps all stakeholders focused on the ultimate outcome. Those stakeholders will include people actively involved in the contact centre (agents, supervisors and analysts) and those indirectly involved, such as system administrators, database developers and other IT specialists. All have a mixture of priorities and motivations. Without a project manager keeping an eye on alignment, a review of even modest scope can drift off track.

- Data interpreter: Besides the champion and the project manager, there is another crucial role to be filled in a review of contact centre reporting: the data interpreter. Some key stakeholders (agents and their supervisors particularly) will define key reporting metrics in business terms. Others (contact centre systems and database admins) will define these metrics from a “data” viewpoint. There is often a gap between these two definitions that goes undetected as each assumes the other shares the same assumptions. This disconnect may persist through the design, development and implementation stages and ultimately lead to frustrating and time-consuming review and re-work. The data interpreter—usually an IT resource who understands the operational systems and their underlying databases—can help the project avoid this problem by bridging the gap: translating the required key performance indicators from “business speak” into “data speak” that can in turn be embedded in the new reporting solution.

Step 2: Gather and Define Requirements from Stakeholders

By definition, a review of contact centre reporting and analytics is a reassessment of key stakeholders’ requirements. It’s also a great opportunity to make sure the contact centre is conforming to the goals of the organisation as a whole. The most powerful way to accomplish this is to derive the contact centre’s top-level requirements from strategic targets and goals set by the organisation’s leaders.

An hour spent with the vice president of sales and marketing, the chief financial officer or the CEO can go a long way towards defining what needs to be accomplished. While this may sound daunting, most high-level strategic goals can easily be boiled down to specific, measurable actions in the contact centre.

For instance, if a financial institution’s strategic goal is to move into a new market category (say, home insurance), then the contact centre’s goal may be to improve metrics and reporting for cross-selling by customer service agents handling mortgage inquiries. Similarly, for an online retailer of computers that wants to win market share from competitors through better post-sale customer service and technical support, the contact centre goal may be to improve customer satisfaction metrics and reporting.

In many cases, strategic goals lie partly or completely outside the contact centre’s control. Stakeholders in other functional areas of the organisation—marketing, product development, finance, human resources and other back office departments—should be consulted on what feedback or metrics they need from the contact centre to refine their own plans and programmes.

Finally, there are the contact centre’s internal operational goals. Some contact centres want their agents to be more productive, so reporting will have to gather and consolidate accurate data on handle time, talk time or after-call work time. Some want to be more effective in the interactions agents have with customers, so first-contact resolution or customer satisfaction ratings will be key metrics. Most will want to gauge how all the moving parts of the contact centre (agents, management and technology) are working together, so service level, average in-queue and/or on-hold times, average speed-of-answer and abandons will be critical.

Gathering Reporting Requirements

When gathering requirements from stakeholders, it’s important not to shackle their choices to lists of known or existing metrics or reports. Rather, ask each stakeholder group to compile a prioritised list of information, expressed in business terms, which they would like to see from the contact centre. Not all of these “wish-list” items will be achievable, but the lists will help clarify each stakeholder’s needs and suggest new metrics or measurements that may be possible with new reporting technologies.

Such user-driven consultation also helps with stakeholder buy-in. Asking for requirements to be expressed out loud in business terms—or just plain old English—is useful in another way. Consider the difference in the two questions below:

“Can I get a daily report that shows Not Ready Time, Talk Time, Idle Time and Break Time?”

This question is all too easily answered with a canned report showing each of these metrics in hours, minutes and seconds. These metrics then have to be compared (often manually) to shift times (from another report) before they are of any use.

VS.

“How are my agents splitting their time among the various activities that they do within their shifts—whether it’s assigned work or not?”

This question, expressed in plain English, could easily be answered by a single, more meaningful report with trended data shown as proportions (say, 60% inbound, 20% breaks, 10% training, 10% unaccounted for) which a supervisor or coach could understand at a glance—no assembly or decoding required.
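To make the second question concrete, here is a minimal Python sketch that turns a log of agent activity durations into proportions of a shift, including time that is not accounted for. The activity names, the eight-hour shift and the durations are made-up values chosen to match the proportions mentioned above; a real report would draw them from ACD and workforce management data.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def time_split(activity_log: Iterable[Tuple[str, int]], shift_secs: int) -> Dict[str, float]:
    """Return each activity as a share of the shift, plus unaccounted-for time."""
    totals: Dict[str, int] = defaultdict(int)
    for activity, secs in activity_log:
        totals[activity] += secs
    # Anything in the shift not covered by a logged activity is unaccounted for.
    unaccounted = max(0, shift_secs - sum(totals.values()))
    shares = {activity: secs / shift_secs for activity, secs in totals.items()}
    shares["unaccounted"] = unaccounted / shift_secs
    return shares


SHIFT_SECS = 8 * 3600  # hypothetical eight-hour shift

# Hypothetical activity log: (activity, duration in seconds)
log = [
    ("inbound", 9000),
    ("breaks", 1800),
    ("inbound", 8280),
    ("training", 2880),
    ("breaks", 3960),
]

shares = time_split(log, SHIFT_SECS)
for activity, share in shares.items():
    print(f"{activity}: {share:.0%}")
```

Presenting shares rather than raw hours, minutes and seconds is what lets a supervisor read the result without cross-referencing shift times from another report.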

Eliminate redundancy

Look for ways to consolidate many reports into a few—or just one. If users currently receive multiple reports summarising the same data (by skill set, queue or application, or by time or geography), make eliminating this redundancy a key requirement of the review. The right reporting solution will provide ways to interact with a report to show (or hide) data as needed, and to navigate through standard hierarchies of data (year/month/week/day) within the same report. It will also allow your contact centre’s unique hierarchies (site/team/agent, or custom geographies) to be represented as well.
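The idea of navigating one report through a hierarchy, rather than maintaining a separate report per level, can be sketched in a few lines of Python. The site/team/agent hierarchy matches the example above, but the rows, names and call counts are invented for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical hierarchy, ordered from broadest to most detailed level.
HIERARCHY = ("site", "team", "agent")

# Hypothetical detail rows, one per agent.
rows = [
    {"site": "Leeds", "team": "Sales", "agent": "A01", "calls": 40},
    {"site": "Leeds", "team": "Sales", "agent": "A02", "calls": 35},
    {"site": "Leeds", "team": "Support", "agent": "A03", "calls": 25},
    {"site": "Cardiff", "team": "Support", "agent": "A04", "calls": 30},
]


def roll_up(rows: List[dict], depth: int) -> Dict[Tuple[str, ...], int]:
    """Total calls at the given depth of the hierarchy (1 = site, 2 = team, 3 = agent)."""
    keys = HIERARCHY[:depth]
    totals: Dict[Tuple[str, ...], int] = defaultdict(int)
    for row in rows:
        totals[tuple(row[k] for k in keys)] += row["calls"]
    return dict(totals)


by_site = roll_up(rows, 1)
by_team = roll_up(rows, 2)
print(by_site)
print(by_team)
```

Because every level is derived from the same detail rows, the site, team and agent views cannot drift out of step the way separately maintained reports can.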

The requirements list should also include any custom or unique calculations that users or analysts may be performing by hand or in spreadsheets. Every contact centre serves a unique customer segment, market, industry and/or geography, and “standard” lists of metrics can never capture everything a given organisation needs to measure. A new reporting solution should automate these custom formulas and apply any constants (cost-per-interaction data, loaded labour rates, occupancy or service level targets, or other performance objectives) needed in their calculation or presentation.
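As an example of the kind of hand calculation worth automating, the Python sketch below applies two constants to raw totals: a loaded labour rate to produce a labour-only cost per interaction, and an occupancy target to flag performance. The constant values, function names and formulas are assumptions for illustration; each organisation will have its own definitions.

```python
# Hypothetical constants that would normally be maintained in the reporting solution.
LOADED_LABOUR_RATE_PER_HOUR = 30.0   # assumed fully loaded hourly cost of an agent
OCCUPANCY_TARGET = 0.85              # assumed performance objective


def cost_per_interaction(handle_secs: float, interactions: int,
                         rate_per_hour: float = LOADED_LABOUR_RATE_PER_HOUR) -> float:
    """Labour-only cost per interaction: rate x handle hours / interactions."""
    if interactions == 0:
        return 0.0
    return rate_per_hour * (handle_secs / 3600) / interactions


def occupancy(handle_secs: float, available_secs: float) -> float:
    """Share of logged-in time spent handling contacts (one common definition)."""
    staffed = handle_secs + available_secs
    return handle_secs / staffed if staffed else 0.0


# Hypothetical daily totals for one team.
handle_secs = 7 * 3600      # seven hours of handle time
available_secs = 1 * 3600   # one hour waiting for contacts
interactions = 84

cpi = cost_per_interaction(handle_secs, interactions)
occ = occupancy(handle_secs, available_secs)
meets_target = occ >= OCCUPANCY_TARGET
print(f"cost per interaction: {cpi:.2f}, occupancy: {occ:.1%}, meets target: {meets_target}")
```

Keeping the constants in one place means a change to the labour rate or target flows through every report that uses it, instead of being retyped into several spreadsheets.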

Expand requirements

Don’t be restricted by the old limits on reporting imposed by operational data silos. If supervisors or coaches need an agent scorecard combining data from ACD, screen capture, quality management and workforce optimization or workforce engagement systems, then add a mock-up report to the requirements. It’s the reporting solution’s job to tap into the relevant data silos as needed—and to present that scorecard as though it came from a single, all-knowing system. Similarly, if analysts have struggled in the past to build end-to-end call audits from raw ACD and/or IVR data, then this should be a determining requirement for a new reporting technology.
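A mock-up of such a scorecard can be backed by a very small prototype. The Python sketch below joins per-agent figures from three stand-in sources (ACD, quality management and workforce management) into one row per agent. The source names, metric names and values are all hypothetical, and a real reporting solution would pull them from the systems themselves.

```python
from typing import Dict

# Hypothetical extracts from three separate systems, keyed by agent ID.
acd = {
    "A01": {"calls_handled": 120, "avg_talk_secs": 210},
    "A02": {"calls_handled": 95, "avg_talk_secs": 260},
}
quality = {
    "A01": {"qa_score": 0.92},   # A02 has not been evaluated yet
}
wfm = {
    "A01": {"adherence": 0.97},
    "A02": {"adherence": 0.88},
}


def build_scorecard(**sources: Dict[str, dict]) -> Dict[str, dict]:
    """Combine per-agent metrics from several sources into one row per agent.

    Each metric is prefixed with its source name so its origin stays visible.
    """
    agents = sorted(set().union(*(data.keys() for data in sources.values())))
    scorecard: Dict[str, dict] = {}
    for agent in agents:
        row = {}
        for source_name, data in sources.items():
            for metric, value in data.get(agent, {}).items():
                row[f"{source_name}.{metric}"] = value
        scorecard[agent] = row
    return scorecard


scorecard = build_scorecard(acd=acd, quality=quality, wfm=wfm)
for agent, row in scorecard.items():
    print(agent, row)
```

Prefixing each metric with its source is a design choice made here for traceability; it also makes gaps obvious, such as an agent with no quality evaluation in the period.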

Step 3: Create a comprehensive mock-up of your new call centre reporting system

With complete requirements and stakeholder buy-in, it’s time to begin designing actual reports. Again, spreadsheets are a useful tool to create mock-up reports. Most stakeholders have the skills to view and at least make cosmetic changes to spreadsheet mock-ups. These should circulate to a design team with at least one representative from each stakeholder group. A report should only move to the implementation phase when all affected stakeholder reps have signed off on it. Each contact centre’s report mock-ups will be different, but here are some key guidelines that should pertain to all templates passed to the design phase:

1) Document sources: Beyond layout and formatting, these mock-up reports should note which operational data system each metric should be drawn from (ACD, IVR, WFM, WEM, CRM, etc.) and which custom formulas or calculations need to be applied in the report itself. Cumulative metrics should also be highlighted along with any special rules governing them (fiscal YTD, etc.).

2) Note calculations from source systems: Remember, many of the metrics pulled from operational systems like the ACD are actually calculations, with formulas underlying them. If a new metric is created from the combination of two or more calculated statistics, the operational source of each constituent metric should be noted along with their respective formulas or calculations. This is particularly important in mixed-vendor environments, where a standard metric like service level may be calculated differently by different systems.

3) Check your frequency: Note stakeholders’ required frequency for each report refresh (intraday, daily, weekly, monthly, quarterly, etc.) and whether previous editions of each report should be maintained separately (i.e., not overwritten when updated) and kept accessible for future reference. Your reporting solution should give you the option of filtering report data based on date, making a historical archive of past reports redundant.

4) Determine distribution and scheduling: Each report mock-up should note who will be receiving or accessing the report and through which distribution channel. Some users may simply require a PDF or spreadsheet attachment via email; others may require access to a web portal for more interaction with report data. Avoid sending reports to stakeholders who don’t need them.

5) Keep it simple: Don’t try to include too many metrics in a single report. Each report should support a single management action or decision, or a group of related decisions. For instance, scorecards used to coach individual agents should include metrics that are under the agent’s control (not-ready time, average talk time, attendance, etc.) and relevant independent variables like calls per hour, but not top-line measures like calls offered, agent utilisation or abandon rates.

6) Avoid duelling metrics: Some contact centre measures clash with others, pulling managers in multiple directions. If managers decide that first call resolution is a customer service priority, then setting low targets for average talk times will actually hinder agent and team performance. Arbitrary call-time targets will also hamper sales-oriented centres that reward higher revenue-per-call or conversion rate (the percentage of inbound or outbound calls converted to a sale). Avoid this conflict by keeping reports relevant to one actionable decision or group of related decisions.
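Guideline 2 is easier to appreciate with numbers. The Python sketch below applies two commonly seen service level conventions to the same ten calls: one divides by all calls offered, the other only by calls answered. The call data, the 20-second threshold and the variant names are hypothetical, and neither formula is claimed to be the one any specific vendor uses.

```python
from typing import Dict, List


def service_level(calls: List[Dict], threshold_secs: float, variant: str) -> float:
    """Share of calls answered within the threshold, under a named convention."""
    answered = [c for c in calls if c["answered"]]
    within = sum(1 for c in answered if c["wait_secs"] <= threshold_secs)
    if variant == "of_offered":
        denominator = len(calls)       # abandoned calls count against the result
    elif variant == "of_answered":
        denominator = len(answered)    # abandoned calls are ignored
    else:
        raise ValueError(f"unknown variant: {variant}")
    return within / denominator if denominator else 0.0


# Hypothetical interval: 6 answered quickly, 2 answered slowly, 2 abandoned.
calls = (
    [{"answered": True, "wait_secs": 10} for _ in range(6)]
    + [{"answered": True, "wait_secs": 45} for _ in range(2)]
    + [{"answered": False, "wait_secs": 60} for _ in range(2)]
)

sl_offered = service_level(calls, 20, "of_offered")
sl_answered = service_level(calls, 20, "of_answered")
print(f"of offered: {sl_offered:.0%}, of answered: {sl_answered:.0%}")
```

The same interval yields two different service levels depending on the denominator, which is why the mock-up should record the formula alongside the source system for every calculated metric.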

Step 4: Finalise your contact centre reporting and analysis architecture

The first three phases of a comprehensive review of contact centre reporting and analytics can—and often should—be completed before meeting with vendors to select enabling technologies. The goal is to define your needs—your ideal list of reports and metrics—and then find a solution that enables you to meet those needs. If a vendor is introduced into the review process too early, their technical limitations may inherently limit the report requirements and design, and the whole project may ultimately fall short of its potential.

There are three solution categories that have evolved to meet the challenge of better reporting and analytics in the contact centre:

1. Report extension tools

Sometimes called point-to-point tools, report extension tools are offered by many of the same vendors who manufacture the contact centre’s operational hardware and software. These systems almost always feature built-in, standard, “out of the box” reports for a given system like the ACD, as well as report-authoring tools that analysts can use to create their own custom reports. In skilled hands, these tools allow data to be imported from other systems and data stores. Similarly, vendors of real-time dashboard, workforce optimization, workforce engagement and quality management software offer reporting tools that may be used to access other data sources.

2. Performance management suites

These solutions usually offer reporting services as part of a much wider array of applications, including workflow management, coaching, contact type profiling, agent recognition and compensation.

3. Business Intelligence (BI)

BI is a software architecture designed specifically for reporting and analysis of information from operational data systems of any kind—point-of-sale, manufacturing, banking and finance, sales and marketing, or any other computerised system with an underlying database logging transactional data.

Step 5: Implementation, testing and review

Defining your reporting architecture and selecting a vendor is just the front half of the process of transforming your contact centre reporting system. An intensive testing and review process is still critical to a successful implementation, even if your vendor takes full responsibility for installing and configuring all the required software, as well as developing all content based on your mocked-up reports.

The Testing and Review Process

Testing and review is typically an iterative process:

Develop Alpha Reports

Early “alpha” versions of each report will be generated with sample data and distributed to internal analysts for data validation.

Hone Calculations & Formulas

Alpha reports get recirculated back to report designers for corrections in calculations or formulas.
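Part of this validation loop can be automated. As a minimal sketch, the Python below compares the figures in an alpha report against reference values an analyst has calculated independently from the source data, and lists anything missing or out of tolerance. The metric names, values and tolerance are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def validate(report: Dict[str, float], reference: Dict[str, float],
             rel_tol: float = 1e-6) -> List[Tuple[str, object, float]]:
    """Return (metric, reported value or 'missing', expected value) for each discrepancy."""
    issues: List[Tuple[str, object, float]] = []
    for metric, expected in reference.items():
        if metric not in report:
            issues.append((metric, "missing", expected))
        elif not math.isclose(report[metric], expected, rel_tol=rel_tol):
            issues.append((metric, report[metric], expected))
    return issues


# Hypothetical alpha report output and analyst-calculated reference figures.
alpha_report = {"calls_offered": 1000, "abandon_rate": 0.05}
reference = {"calls_offered": 1000, "abandon_rate": 0.04, "aht_secs": 250.0}

issues = validate(alpha_report, reference)
for metric, reported, expected in issues:
    print(f"{metric}: reported {reported}, expected {expected}")
```

A list of discrepancies like this gives report designers something specific to correct on each pass, and an empty list is a reasonable signal that the numbers are ready for formatting work.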

Report Formatting/User Experience

Once the numbers are right, designers move on to report formatting tasks like adding charts and graphs, improving the look of tables and generally improving the user experience.

End-User Testing & Review

Once reports have achieved a certain polish, it’s time to expand the testing and review process to include end users. These reviews should consider how the reports look, how their interactive features work, how they print out and, most importantly, how useful they are.*

Stakeholder Approval

Usually it takes a few testing and review iterations with all stakeholder audiences before reports are ready for implementation and day-to-day use. As with report mock-ups in the design phase, representatives of each stakeholder group should (literally) sign off on each report before it is put into production.

Ongoing Refinement

Once reports go live in the wider contact centre, stakeholders should still expect one or two hiccups as final configuration issues are worked out. Representatives from each stakeholder group should document any errors or unpredictable behaviour they experience in using the new reports.

* NOTE: Involving these stakeholders too soon in testing can be a mistake. Non-technical users typically can’t add much value to early data validation, and it can be difficult for them to shake a bad first impression made by a report containing ratty or nonsensical data. It’s best to wait to solicit end-user feedback until all they’re reviewing is the day-to-day utility of reports.

Function should define form

If your software vendor is tasked with report design, don’t let their enthusiasm for fancy new technology take your project off course. Just because they can show 16 different metrics represented as speedometers in a dashboard with a 15-minute refresh doesn’t mean they should—unless your requirements process identified that need. The same rule applies to internal “power” users with advanced skill sets who may be understandably excited about what their new tools can do. There will be time enough for groundbreaking innovation once the first wave of reports meet documented requirements.

Continually Improving Your Contact Centre Reporting Programme

Whether your contact centre takes the build-on-what-you-have approach or immediately undertakes a comprehensive review and rebuild, the ultimate sign of success is the same: when you’re getting the reports you need, filled with accurate data that’s useful to you, in a timely fashion, without being burdened with manual work to patch gaps. At the very least, following the guidance provided here should lead you to a point where you are free to spend more of your time and effort on improving your contact centre’s performance, rather than chasing bits of data down dead ends.

But though re-engineering your contact centre reporting programme will deliver significant and ongoing benefits, there is no true endpoint to this process. The contact centre ecosystem continues to expand in size and complexity, with emerging channels like social media, new switching technologies like SIP and innovations in performance management techniques. This evolution isn’t just constant; it’s accelerating. And these changes will bring new reporting needs and create new challenges in meeting those needs.

Fortunately, these future challenges won’t be all that different from those currently facing many contact centres: bringing together disparate data streams, harmonising those data streams, and orchestrating that integrated data into relevant, usable reports and KPIs that show you what is happening in your contact centre.

To that end, the best practices covered on this page will remain the best practices for tackling the contact centre reporting challenges of the future: defining project scope, identifying stakeholders, gathering requirements and creating mock-up reports that express them, choosing a reporting architecture that can meet reporting needs now and in the future, reviewing, testing, and finally implementing a solution. Follow this guidance, and you’ll be on your way to more accurate, more usable reports, and more time to focus on using those reports to drive the performance of your contact centre and the success of your business.